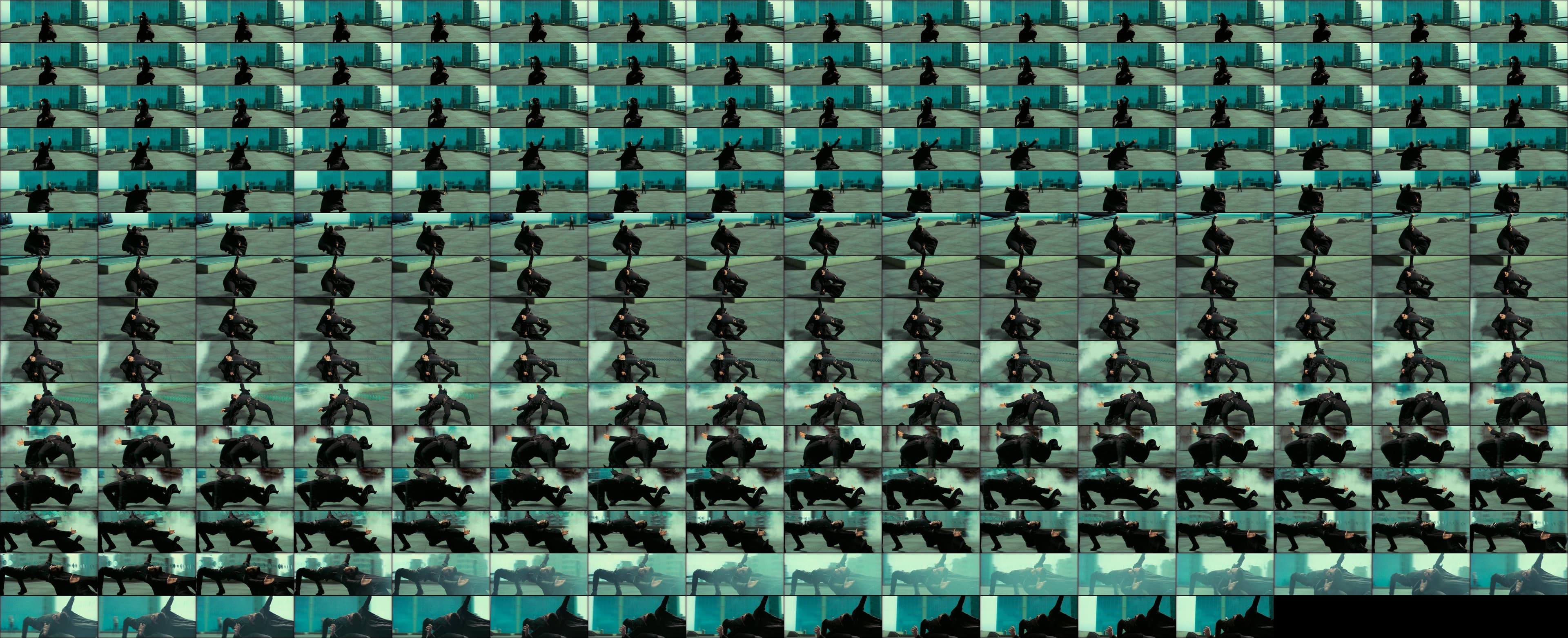

Bullet Time (Online) is a Gaussian splat reconstruction of 237 frames of the bullet time sequence from The Matrix (1999). I took the frames from the Blu-ray, ran them through a structure-from-motion pipeline, and got back a navigable Gaussian splat of a place that only existed in the Matrix. A round trip from CGI to celluloid and back to CGI.

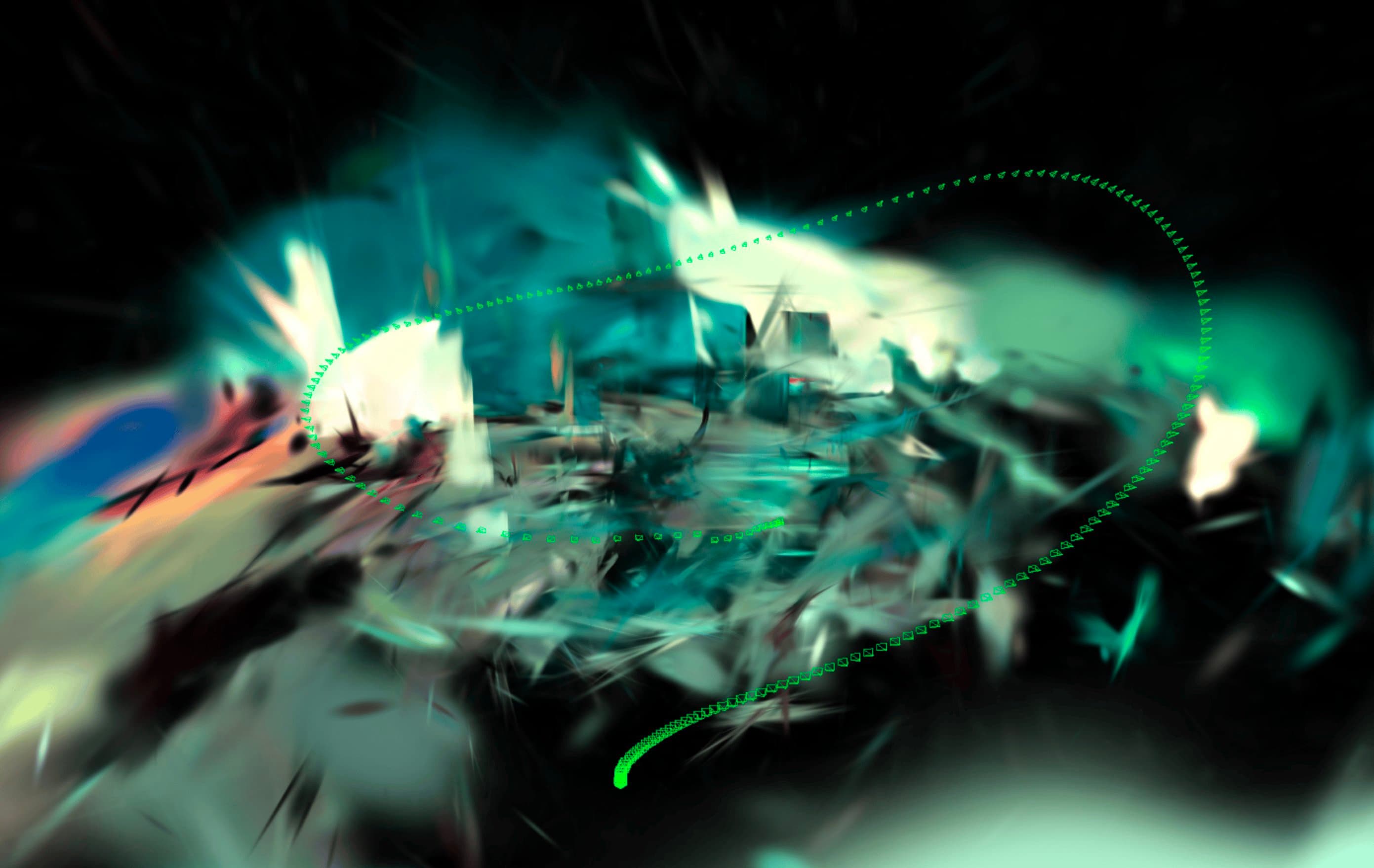

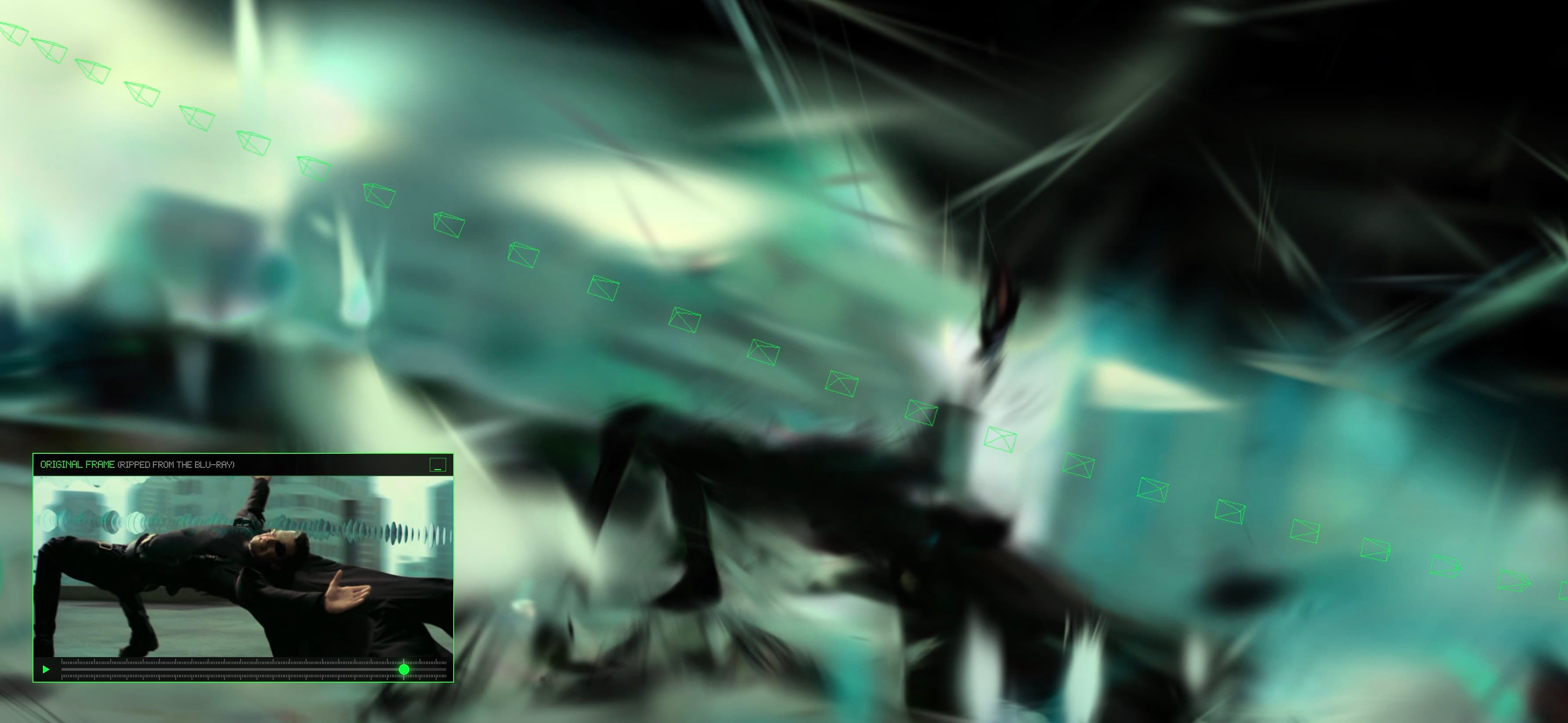

The reconstruction is not clean, and that's the point. Neo's body was moving, so it was reconstructed as duplicate, smeared splats all over the place. The rooftop holds together in some places and dissolves into floating color in others. If you stay on the original camera path, it looks roughly like the film. The moment you orbit off that path, the illusion falls apart. You see the back of geometry that was only ever meant to face one direction. You see gaps where no frame had any information. The splat is an honest object: it shows you exactly where 237 frames of cinematic illusion agree on a geometry, and where they stop agreeing.

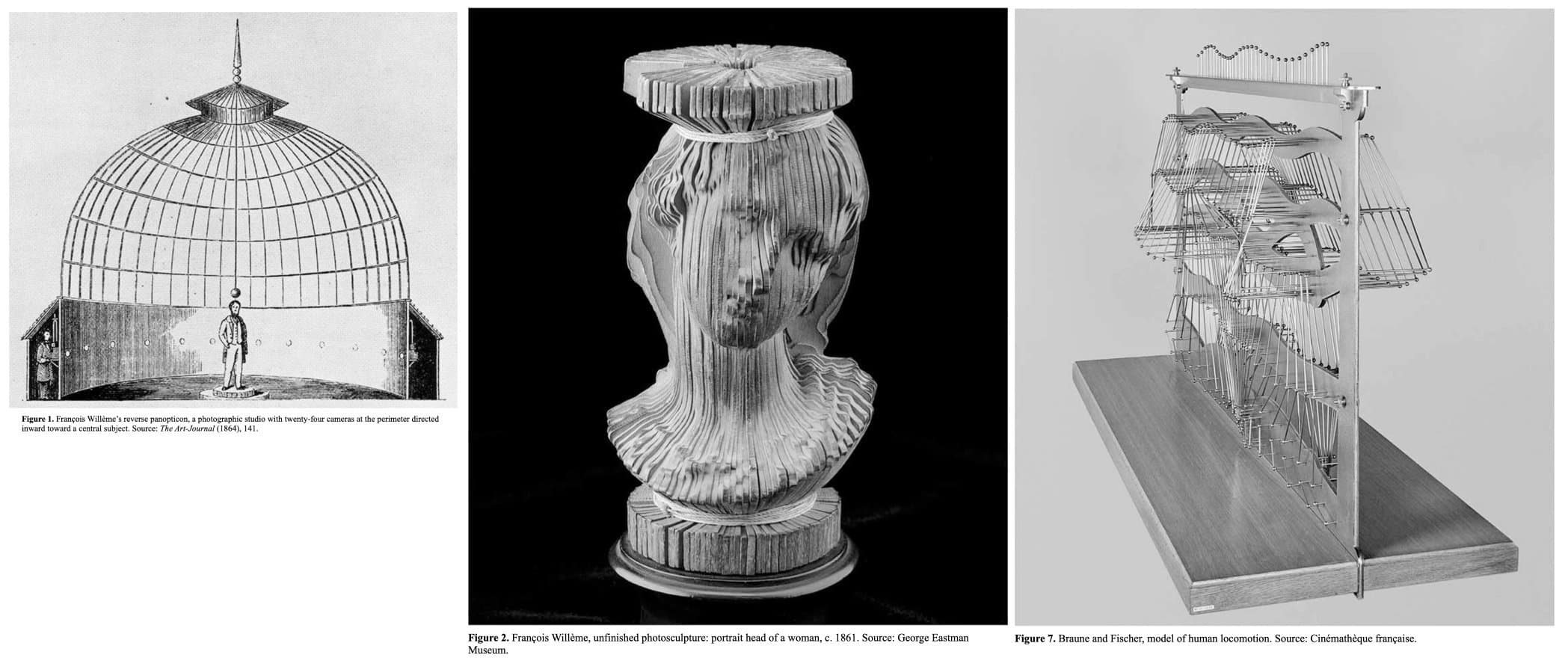

Most people remember bullet time as a 1999 special effect. The technique is older than cinema. In the 1860s, François Willème arranged twenty-four cameras in a circle around a subject and used the photographs to carve a sculpture. Thirty years later, Braune and Fischer did the same thing with moving bodies, triangulating limb positions in 3D space. The Wachowskis simulated the whole array digitally. Same operation every time: surround a subject with cameras, reconstruct what no single viewpoint can see. Willème had a sitter. Braune and Fischer had a body in motion. The Wachowskis had a render, and it worked because the filmstrip never needed to be spatially consistent. This project adds a fourth term: a reconstruction pipeline that finds neither a real body nor a real place, and reports back on what it found instead.

Source Material

I extracted the 237 frames at 4K resolution from the 2009 Blu-ray release of The Matrix. About ten seconds of footage, the full rooftop bullet dodge. Each frame becomes a discrete "photograph" for the reconstruction pipeline.
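As a rough sketch of what this extraction step can look like, the snippet below only builds an ffmpeg command line for dumping a fixed number of consecutive frames as numbered PNGs. The source file name, the start timestamp, and the output naming pattern are placeholders chosen for illustration, not the values used for this project.

```python
from pathlib import Path
from typing import List

FRAME_COUNT = 237  # number of frames in the sequence, as stated above


def build_ffmpeg_command(source: Path, start: str, out_dir: Path,
                         frame_count: int = FRAME_COUNT) -> List[str]:
    """Build an ffmpeg call that writes `frame_count` frames as PNG files.

    `source`, `start` and the file naming pattern are hypothetical
    placeholders; only the frame count comes from the article.
    """
    return [
        "ffmpeg",
        "-ss", start,                      # seek to the first frame of the shot
        "-i", str(source),                 # input video file
        "-frames:v", str(frame_count),     # stop after this many video frames
        "-start_number", "1",              # number output files from 1
        str(out_dir / "frame_%04d.png"),   # one lossless PNG per frame
    ]


if __name__ == "__main__":
    # Placeholder paths and timestamp, for illustration only.
    cmd = build_ffmpeg_command(Path("source.mkv"), "00:00:00", Path("frames"))
    print(" ".join(cmd))
```

Writing PNGs rather than JPEGs avoids adding a second round of lossy compression before feature matching, which is one reason to prefer them here.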

Pipeline

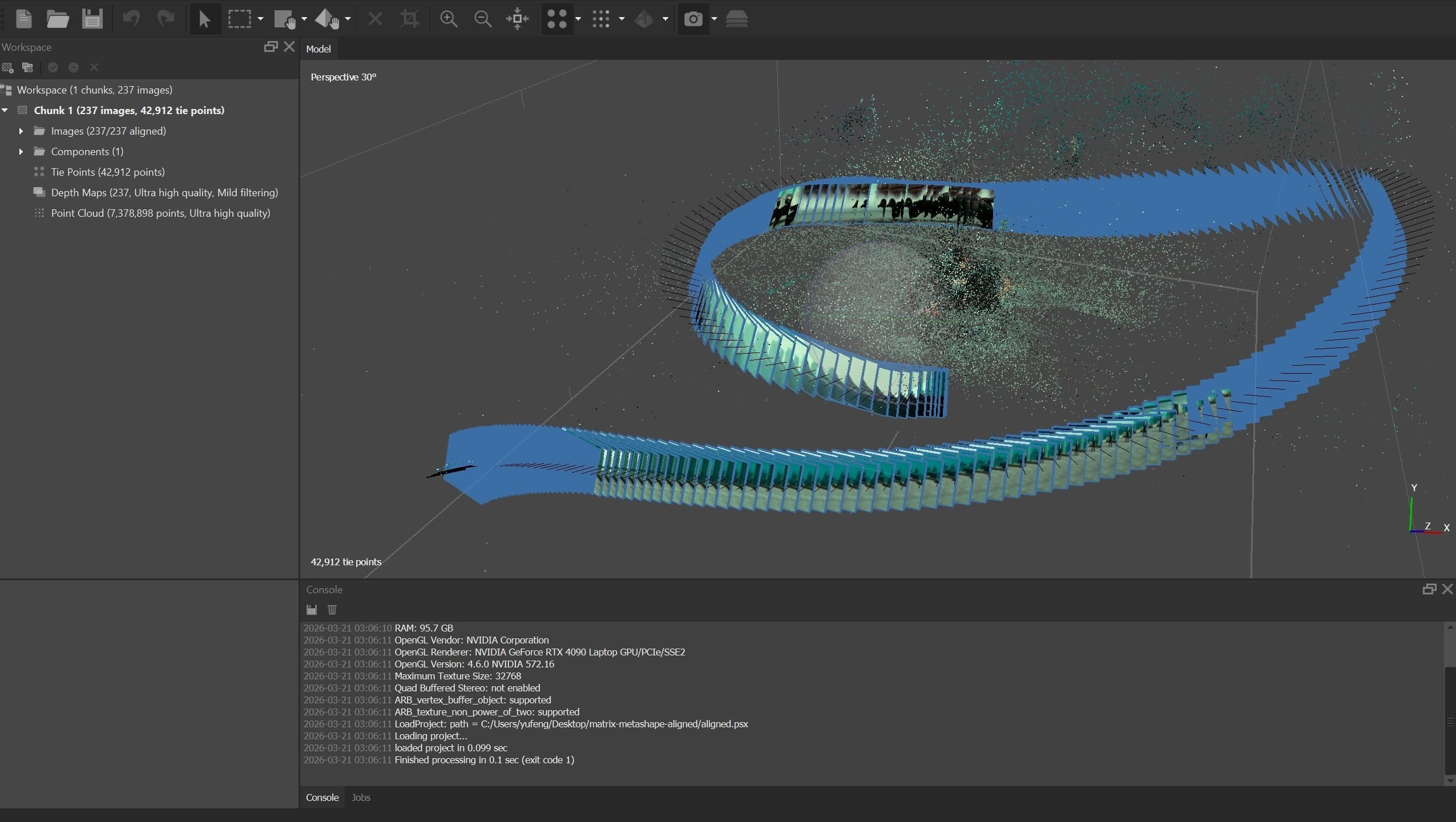

Camera Alignment

I started in 2022 with Agisoft Metashape, which estimates camera positions in 3D space with its own structure-from-motion solver and can export them in COLMAP format. The problem is that bullet time is not a static scene. Neo is dodging and bending through the frames. Standard photogrammetry expects a rigid world. This is a moving subject shot from a moving virtual camera. I had to loosen the matching parameters significantly to get the 237 frames to align at all.

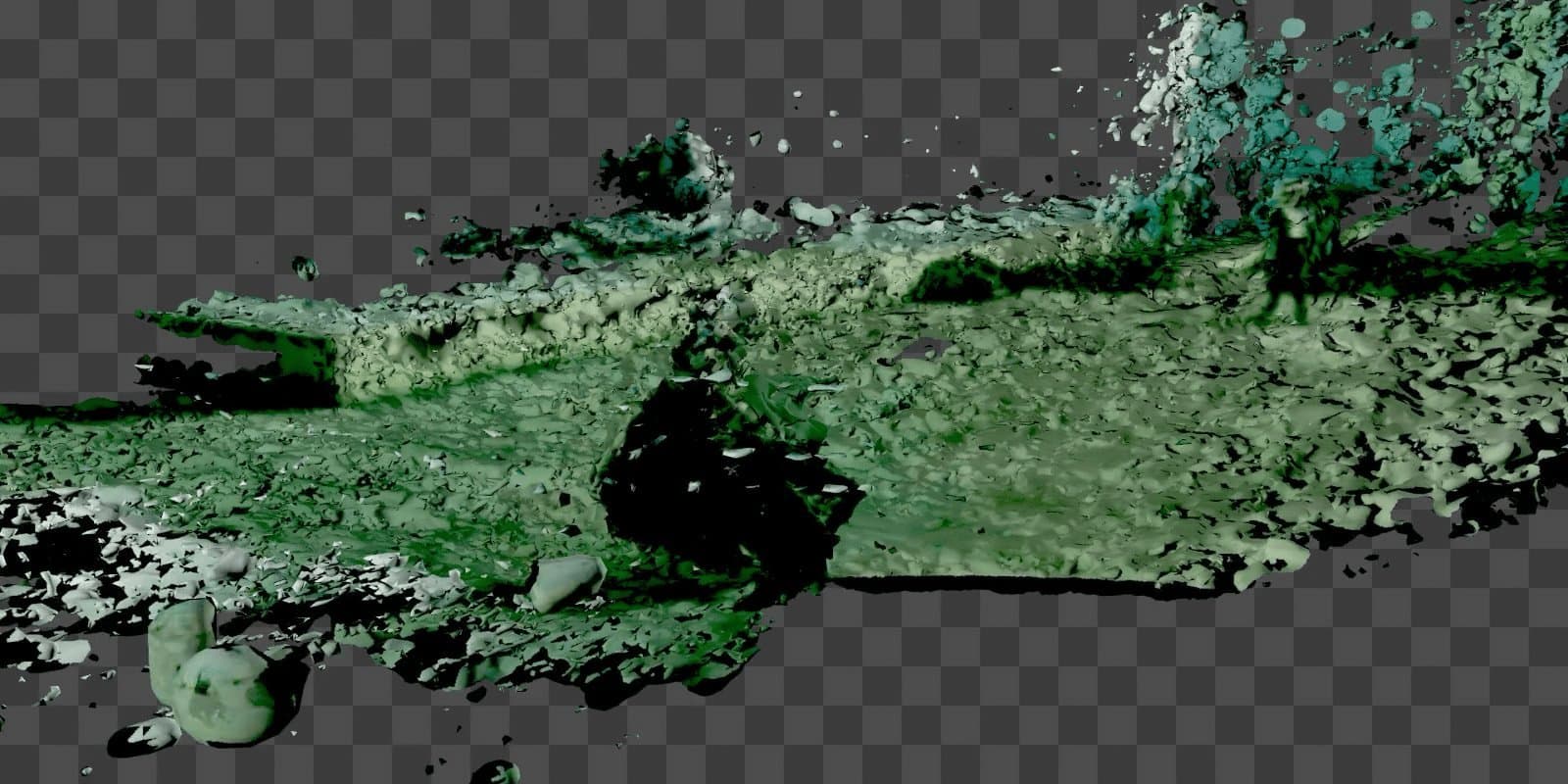

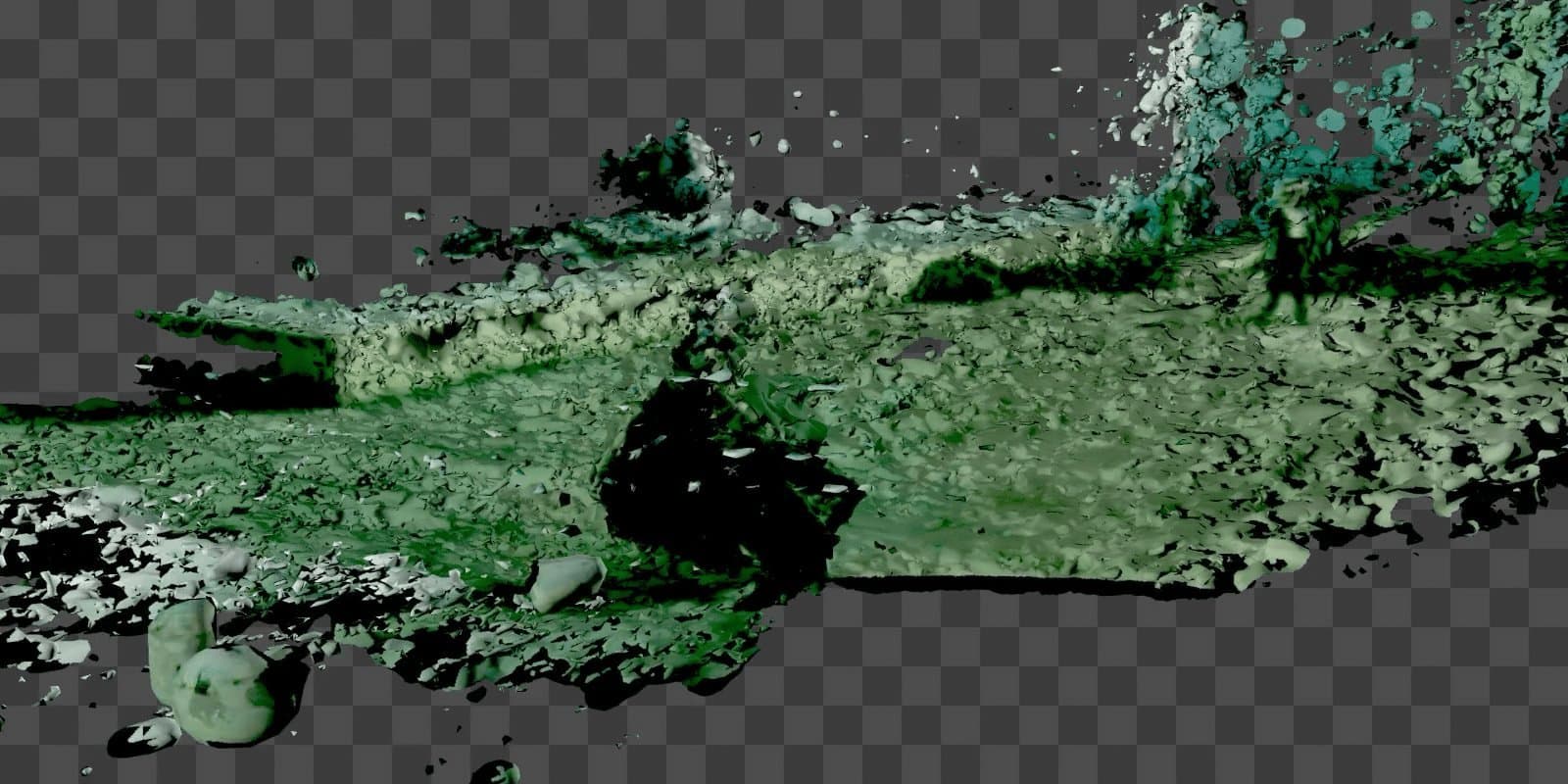

Failed Mesh (Photogrammetry)

The first attempt was a conventional photogrammetric mesh, and it failed badly. Neo's body smears into a black blob, and the mesh is generally confused by the inconsistencies across frames. Photogrammetry needs the world to hold still, but this scene just didn't.

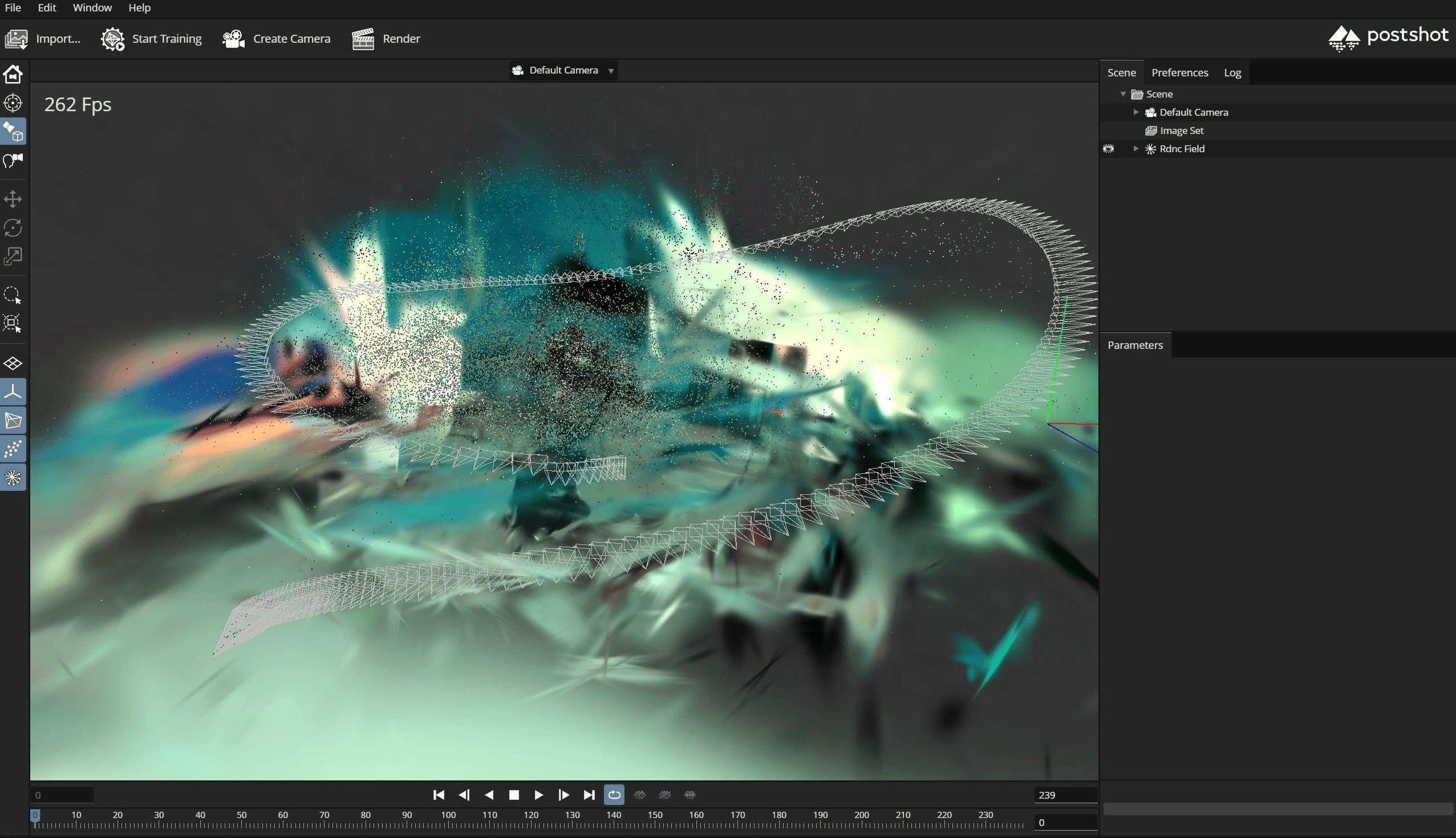

Gaussian Splatting

In August 2023, Kerbl et al. published "3D Gaussian Splatting for Real-Time Radiance Field Rendering". Instead of triangulated meshes, Gaussian splatting represents a scene as millions of soft, overlapping ellipsoids. The format is far more tolerant of inconsistency. Where a mesh requires agreement on surfaces, a splat can hold contradictory information. The same region of space can contain overlapping Gaussians encoding different frames' claims about what belongs there.
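To make the tolerance for contradiction concrete, here is a minimal sketch of the front-to-back alpha blending that splat renderers use to turn overlapping Gaussians into one pixel color. It is simplified: in a real renderer each alpha is the Gaussian's opacity multiplied by its projected falloff at that pixel, and the sorting happens per tile on the GPU. The function name and the example colors are illustrative assumptions.

```python
from typing import Sequence, Tuple

Color = Tuple[float, float, float]


def composite_front_to_back(splats: Sequence[Tuple[Color, float]]) -> Color:
    """Blend (color, alpha) pairs that are already sorted nearest-first."""
    out = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light not yet absorbed by nearer splats
    for color, alpha in splats:
        weight = alpha * transmittance
        for i in range(3):
            out[i] += weight * color[i]
        transmittance *= 1.0 - alpha
    return (out[0], out[1], out[2])


if __name__ == "__main__":
    # Two semi-transparent splats covering the same pixel and making
    # different "claims": one says dark coat, the other says pale sky.
    coat = ((0.0, 0.0, 0.0), 0.5)
    sky = ((0.6, 0.7, 0.9), 0.5)
    print(composite_front_to_back([coat, sky]))
```

Neither claim has to win. Both stay in the scene and the pixel shows a weighted mix, which is why a moving body turns into a smear rather than breaking the reconstruction.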

I saw the opportunity immediately. In 2024, I re-ran the same COLMAP camera alignment through Postshot and the scene came together. Not clean, but one honest representation that doesn't omit the complexity.

Delivery

I compressed the splat into PlayCanvas's SOG format (~3 MB, roughly 90% smaller than the raw PLY) and render it in real time in the browser. You can orbit freely, scrub through the 237 original camera positions with a slider, and lock onto any of the reconstructed camera angles. The raw splat PLY and COLMAP camera data are available to download on GitHub.
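If you want to work with the camera data yourself, the sketch below shows how a camera position can be recovered from one pose line of a COLMAP text export. It assumes the data is in COLMAP's `images.txt` layout (`IMAGE_ID QW QX QY QZ TX TY TZ CAMERA_ID NAME`), where the quaternion and translation map world coordinates into camera coordinates, so the camera center is `-Rᵀt`. The function names and the sample line are made up for illustration, and this is not the viewer's actual code.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def camera_center(qw: float, qx: float, qy: float, qz: float,
                  tx: float, ty: float, tz: float) -> Vec3:
    """Return the camera position in world space for a world-to-camera pose."""
    n = math.sqrt(qw * qw + qx * qx + qy * qy + qz * qz)
    w, x, y, z = qw / n, qx / n, qy / n, qz / n
    # Rotation matrix R built from the unit quaternion (w, x, y, z).
    r = [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]
    t = (tx, ty, tz)
    # C = -R^T t: column i of R dotted with t, then negated.
    cx, cy, cz = (-(r[0][i] * t[0] + r[1][i] * t[1] + r[2][i] * t[2])
                  for i in range(3))
    return (cx, cy, cz)


def parse_image_line(line: str) -> Tuple[str, Vec3]:
    """Parse one pose line; assumes the image name contains no spaces."""
    parts = line.split()
    qw, qx, qy, qz, tx, ty, tz = (float(v) for v in parts[1:8])
    return parts[9], camera_center(qw, qx, qy, qz, tx, ty, tz)


if __name__ == "__main__":
    # Hypothetical sample line, not taken from the project's data.
    print(parse_image_line("1 1 0 0 0 1 2 3 1 frame_0001.png"))
```

Sorting the parsed poses by image name would give the ordered camera path that a slider can step through.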

Viewer

References

- Lana and Lilly Wachowski, The Matrix (Warner Bros., 1999)

- New World Designs, Creating the Matrix Bullet Time Effect

- Ian Failes, VFX Artifacts: The Bullet Time rig from 'The Matrix' (befores & afters, 2021)

- Alexander R. Galloway, Uncomputable: Play and Politics in the Long Digital Age (Verso, 2021)

- Jussi Parikka, The Photosculptural Hypothesis (Machinology, 2012)

- Jeremy Norman, François Willème Invents Photosculpture: Early 3D Imaging (History of Information)

- MessyNessy, More than 100 Years before 3D Printers, We had Photosculpture (Messy Nessy Chic, 2022)

- Bernd Kerbl, Georgios Kopanas, Thomas Leimkühler, George Drettakis, 3D Gaussian Splatting for Real-Time Radiance Field Rendering (SIGGRAPH, 2023)

- PlayCanvas, SOG: Spatially Ordered Gaussians format